Read our most popular articles

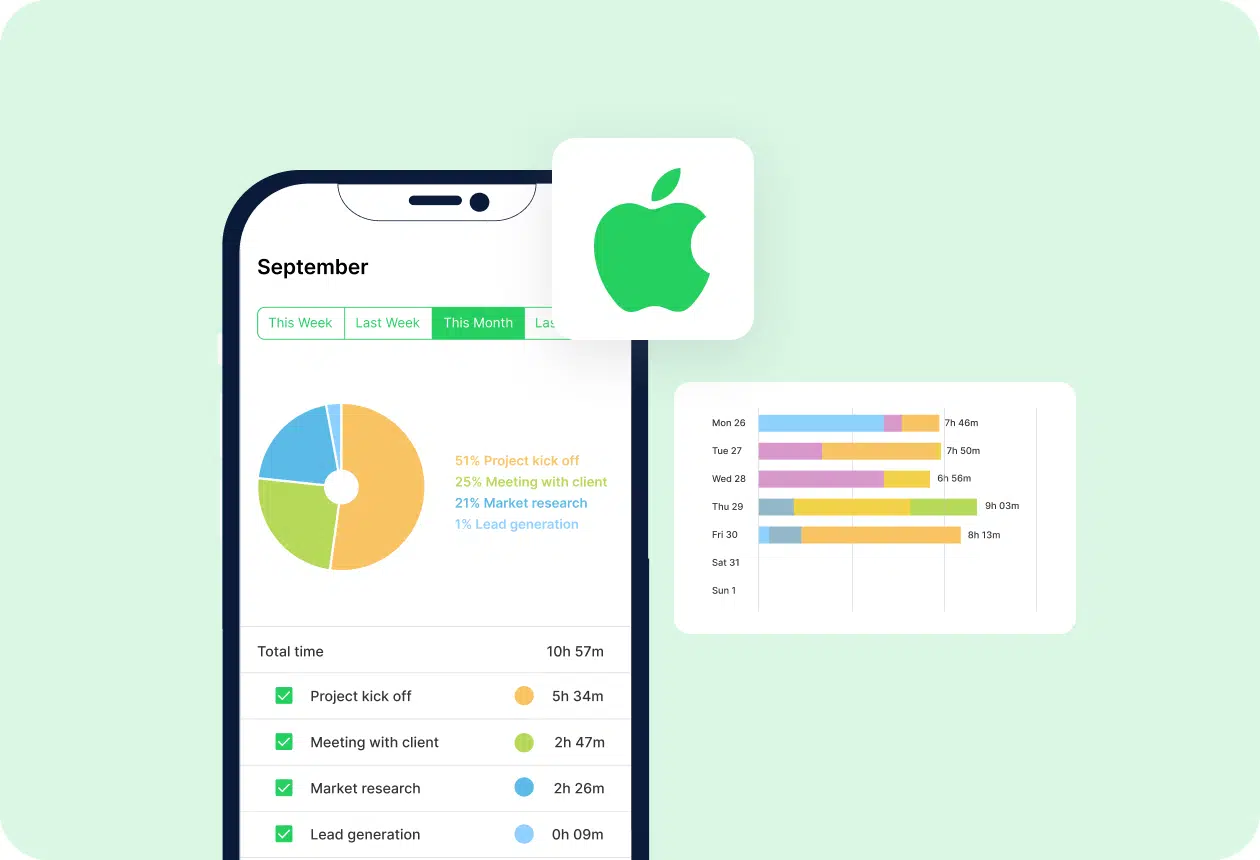

With a dedicated Mac time tracker, you can better manage your time, become more productive, and grow your business. Check out the best time tracking software for Mac.

Paper time cards have become a thing of the past. If you don't use a timesheet app today, you will fall behind. Here are the best timesheet apps to use in 2025!

Flexible working hours, also called a flexible work schedule, have become one of the most appreciated employee benefits these days, especially among remote workers, alongside others such as a high salary and employment insurance. What’s behind their popularity, and are they actually more advantageous than the nine-to-five work schedule? Before we jump to conclusions, regardless of […]

All blog posts

A simple time tracking app is a great solution for anyone who wants to track time easily. It can be a great alternative to advanced project management tools that offer robust features aimed mostly at larger businesses. It’s not easy to track time when a tool has too many features. Sometimes, a simple, free tool to […]

In many jobs, overtime hours are an inevitable part of work. In fact, regular and overtime hours are among the records employers are most often legally required to maintain. Overtime tracking is essential for complying with federal labor laws. What’s more, employers are required by law to compensate employees for overtime work according to the overtime rate […]

In today’s work setting, it’s more important than ever to prioritize tasks. Constant demands, notifications, disruptions, and short deadlines can easily create an illusion of productivity—an environment in which you do all the tasks at once (which is impossible). But as the saying goes, “jack-of-all-trades, master of none.” Effective professionals don’t do more—they do fewer […]

Competitor Tool Reviews, Productivity

Maintaining high retail productivity in today’s world can be extremely challenging. Time pressure, staff turnover, multitasking, unpredictable days, and shifting priorities make it hard for retailers to generate sales. On top of that, there’s the need to keep up with the pace and always stay on top. In the era of technological advancement, it can […]

Wondering which Timeular alternatives are the best ones currently available on the market? We’ve got you covered! If you’re looking for an effective way to manage your work hours, you may have come across Timeular. This popular time-tracking tool has an unusual concept: in addition to the standard app (Early), it offers a physical tracker in […]

Looking for the best Jibble alternatives? We’ve got you covered! Although Jibble is one of the most popular time tracking tools, not everyone is happy with its approach to functionality, privacy, or customer support. The good news? There are plenty of solid solutions on the market that not only let you track time but also […]

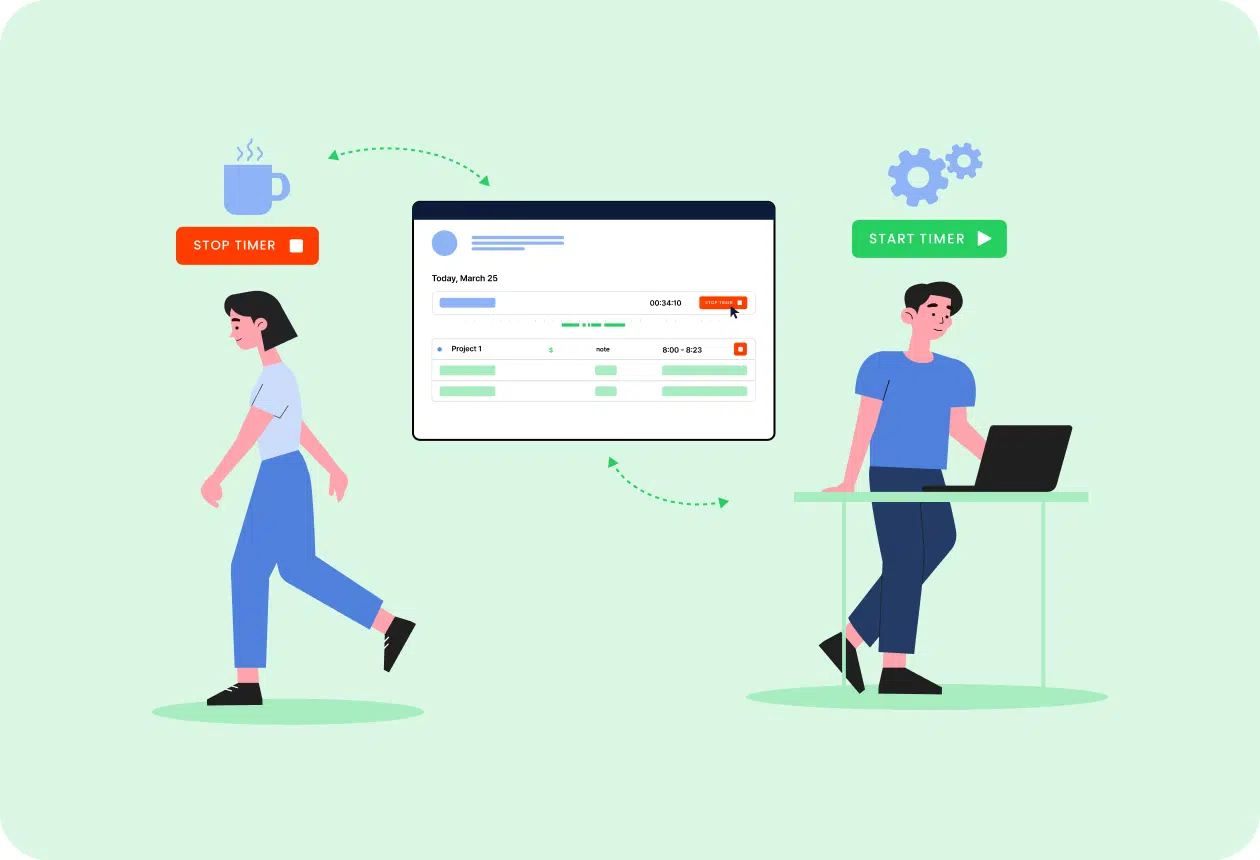

Know where your time goes with TimeCamp!

Track time in projects and tasks, create reports, and bill your clients in just one tool.

Sign up for free

Are you looking for the best Harvest alternatives? If so, you are spoiled for choice, especially if you’re looking for a tool that offers advanced project management features. While Harvest is a great tool, it lacks some functionality on the project management end, which makes some companies look for better, more comprehensive alternatives: Alternatives to […]

Are you looking for the best Toggl alternatives? If so, you have many other great tools to choose from! And don’t get us wrong, Toggl is not a bad tool. Many users value it because it automatically records work hours with a simple online timer. It’s also good for generating timesheet reports, analyzing your team’s productivity, […]

Are you looking for Time Doctor alternatives? You’re in the right place! There are many automatic time-tracking tools out there for your business, and if, for whatever reason, you think that Time Doctor is not a good fit, there are some worthy alternatives that will help you with time tracking and productivity monitoring in your […]

The best billable hours tracker tools have become indispensable for freelancers, agency teams, and consultants who want to maximize productivity and ensure every minute is accounted for. By capturing every task—from client calls to project planning—these solutions help you avoid the guesswork of billing and eliminate revenue leakage. In today’s fast-paced environment, investing in the […]

You’re a freelancer, you’re working the 9-5, or a small business juggling project work, admin, maybe a small clutch of employees, and—of course—life. Or you’re a large employer wanting to make sure the juggling’s being done right (read: efficiently). How? Simple—employee time tracking software and best timesheet apps. Now, call us biased (we get it), […]

Sending invoices is simultaneously exciting, taxing, time-consuming, and—depending on the client—daunting. Handled manually, it’s a painfully administrative affair involving working out costs and taxes—and that’s before we get into bookkeeping and chasing down tricky clients. It’s certainly one of the less glamorous aspects of business. Which is why invoice software—and particularly the best free invoice […]

Productivity killers are omnipresent. But what is actually undermining your employee productivity? It’s probably not what you think. While obvious problems like poor time management get most of the attention, it’s often the unexpected, structural issues that quietly erode focus and performance. This article reveals five less obvious but common productivity killers that affect modern […]

Free clock-in and clock-out apps can be a real lifesaver for teams who need to track clock-ins. If your business still relies on cumbersome spreadsheets for employee time tracking, you’re likely familiar with the daily struggles of formula errors, version control nightmares, and the inevitable moment when someone accidentally deletes half the payroll data. Fortunately, […]

According to a 2025 survey by ADDitude, 56.59% of adults with ADHD said procrastination is their biggest time management struggle, and one-third reported that time management issues cause the most stress in their lives. That’s why many turn to the best apps for ADHD time management to regain control, boost focus, and reduce daily overwhelm. […]

The right Clockify alternative can help boost your team's performance and grow your business. Check out the best apps!

Want to know what the best productivity apps for iPhone are? If you’re juggling work deadlines, personal errands, and trying to squeeze in some me-time, your iPhone can be a lifesaver. With the right tools, it is more than just a phone—it’s a productivity powerhouse. In this guide, we’ve rounded up the best iPhone productivity […]

Are you tracking time spent on different activities and projects in your company? It’s always a good idea to do so, primarily because this way, you can monitor your company’s performance and employee productivity. Time-tracking software can give teams a complete overview of their daily, weekly, and monthly work. Especially if the company has chosen […]

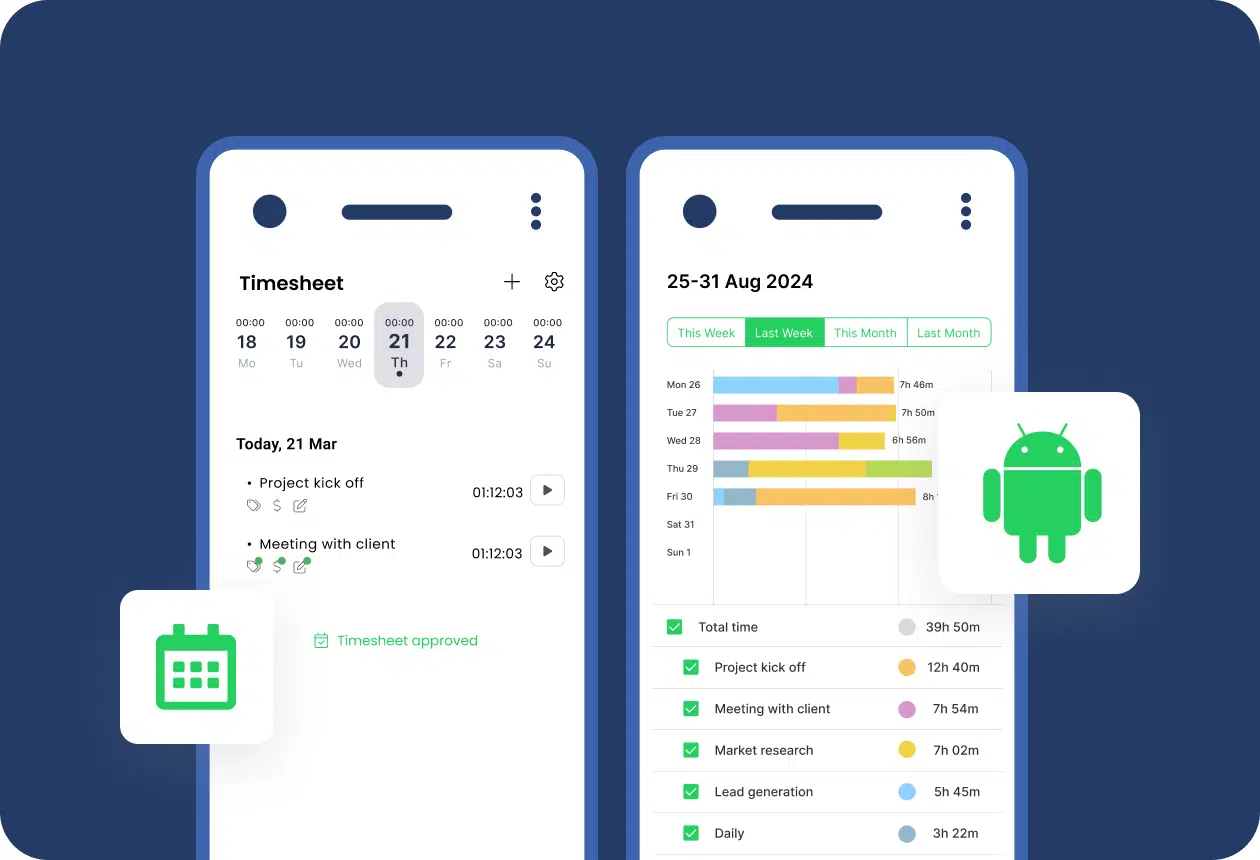

Android is the dominant platform in most countries: three-quarters of all smartphones in the world run Android, and it has over 3.5 billion active users. With so many people using it daily, more and more apps are available to download. Among them are the best Android time tracking apps. However, with the overabundance of tools, you […]

The best calendar apps for Android are simply must-haves for the majority of consultants, freelancers, and professionals out there. And while some people still prefer traditional, printed planners, the truth is that their digital equivalents are more useful and flexible. They can also be integrated with other tools that you use, e.g., your website’s booking system. […]

An expense tracking software solution is a crucial tool for every business because finances are an inseparable part of every company. You need to keep track of receipts, categorize spending, and ensure compliance with budgets or tax regulations. These procedures often eat up valuable time and energy. Fortunately, expense tracking software simplifies this process, offering intuitive […]

Employee management is a core part of running any successful business. It includes not only all HR formalities but also smart practices aimed at increasing work efficiency. Today, it is difficult to imagine employee management without functional software. It minimizes the admin burden on HR and allows you to unlock the full potential of the […]

“There are constant pressures toward unproductive and wasteful time-use.” – Peter Drucker, The Effective Executive, 1967. TL;DR: I Tracked 5995 Hours of My Life in a Year: Here’s What I Learned. I tracked all my time for a year (5995h, without sleep) using a calendar and 15 types of activities. I’m pretty happy about […]

If you are a new business owner looking to understand the costs of an employee, this article is for you.